
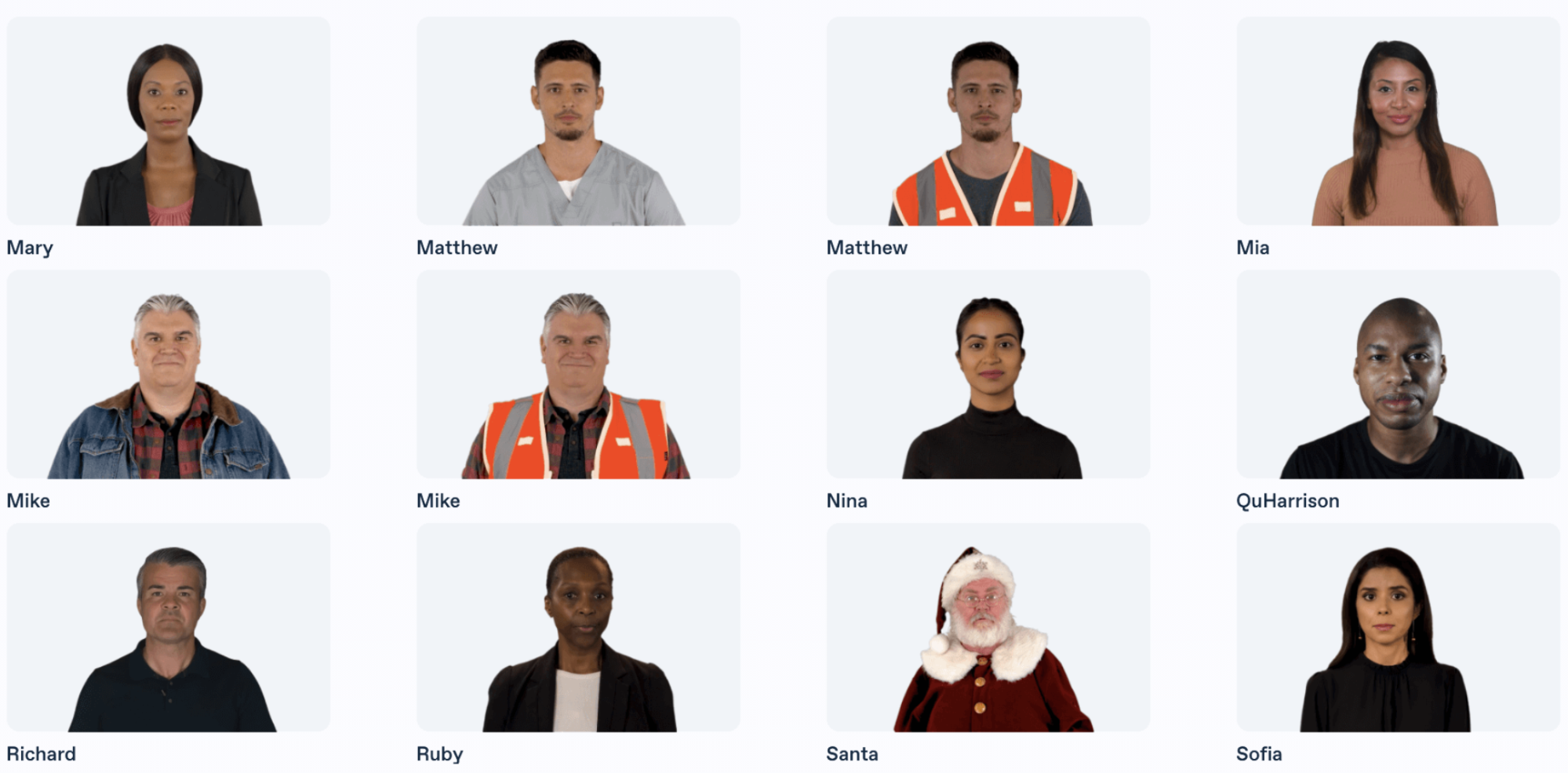

Synthesia, the AI avatar video platform (and the company whose name is closest in spelling to Synthedia), announced a $90 million Series C funding round. The company has raised $157 million to date.

“The round, which values the company at $1 billion, is led by Accel with investment from NVentures, NVIDIA’s venture capital arm, and participation from existing investors Kleiner Perkins, GV, Firstmark Capital, Alex Wang, Olivier Pomel, and Amjad Masad. With a year-over-year user growth rate of 456% and over 12 million videos generated on the platform to date, the company has consistently driven triple-digit growth while serving more than 50,000 businesses across the globe.”

NVIDIA’s participation in the round is notable given its strong push in generative AI and its historical focus on video rendering. The 456% annual growth is also a positive sign. You can typically ignore large growth-rate claims from startups that do not reveal any nominal figures, because it is easy to show strong growth when you are building from a very small base. However, Synthesia was already one of the leading companies in the space, so growth of more than 5x suggests significant traction and recurring customers. This may also signal that broader industry growth is taking hold after several years of modest traction.

Everyone seems to agree that large language models (LLMs) and text-to-image AI models are categories of generative AI. However, some are unsure whether “digital people” fall into this category or whether the software companies just want to be attached to the latest and hottest technology trend. Synthedia’s view is that “digital people” are indeed a category of generative AI and synthetic media.

At a basic level, nearly all of the videos in this space use synthetic speech engines to generate the avatar voices, and text-to-speech is, by definition, a generative AI technology. In addition, the rendering of the avatar and its movements is now often the result of AI model training. Matching the spoken script with the avatar’s mouth movements and emotional expressions adds yet another layer of AI. So, the generative AI label is appropriate.

Some software companies in the space, such as Hour One and D-ID, have also introduced generative text and image features by tapping into OpenAI’s GPT-3 APIs. In many ways, AI avatar video platforms are where generative text, speech, images, and video converge.

In other words, having pre-filmed a presenter saying the name of every Premier League football team, every player, and pretty much every possible action that could happen in a game, Reuters can now generate an indefinite number of synthesized match reports using his image. These reports are nearly indistinguishable from the real thing, and Cohen reports that early witnesses to the system (mostly Reuters' clients) have been duly impressed.

But how exactly does Reuters' and Synthesia's new system exploit artificial intelligence? Well, according to Synthesia's CEO and co-founder Victor Riparbelli, it revolves around the use of digital twins and generative adversarial networks. "The system uses AI to first analyse the video and build a digital copy of the presenter," he tells me. "This is much like what you would do in Hollywood to create a digital character (think Benjamin Button or digital characters in sci-fi flicks), but rather than taking months or years to create a scene, we are able to do this in a matter of hours."

Riparbelli adds that, by using machine learning, and in particular generative adversarial networks, "we can train our system (rather than manually instructing it) to accurately simulate new video of the presenter. And we can do this in a matter of hours, not months or years."

By using machine learning, Reuters' and Synthesia's system can simulate a real presenter.
